Original source: Fox Agency

This video from Fox Agency covered a lot of ground. Three segments stood out as worth your time. Everything below links directly to the timestamp in the original video.

If no one's salary depends on an ethical outcome, the ethical commitment is theatre. Schmarzo's argument is that AI bias isn't just a model problem — it's an incentive problem.

Ethics Must Be Built Into Compensation Systems, Not Just Mission Statements, Schmarzo Argues

The structural issue, as Schmarzo frames it, is that organisations routinely profess commitments to diversity, sustainability, and ethical AI while tying no compensation metric to any of them. He distils the contradiction into a maxim borrowed from a former Procter & Gamble CEO: 'you measure what you reward.' His practical prescription is that KPIs must expand to account not only for value created but also for the costs of failure, including the asymmetric risks buried inside AI models. In hiring algorithms, false positives (candidates hired and later dismissed) generate performance data organisations can learn from. False negatives, the qualified candidates never hired, leave no performance record and so are systematically ignored, quietly entrenching bias with every iteration.

What this exposes is a deeper design flaw: AI models optimised only on the errors they can see will compound the errors they cannot, producing systems that become more confident and less accurate over time. The implication for any organisation deploying AI in consequential decisions is that continuous learning requires deliberate instrumentation of absence, not just presence.
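The asymmetry can be made concrete with a minimal sketch. It is an illustration under stated assumptions, not Schmarzo's method: the `Candidate` type, the toy data, and both helper functions are hypothetical, and the `qualified` field stands in for ground truth that a real organisation would not have for rejected candidates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    hired: bool      # the model's decision
    qualified: bool  # ground truth (assumed known here; normally hidden for rejections)


def error_counts(candidates):
    """Count false positives (hired, unqualified) and false negatives (rejected, qualified)."""
    fp = sum(1 for c in candidates if c.hired and not c.qualified)
    fn = sum(1 for c in candidates if not c.hired and c.qualified)
    return fp, fn


def observable_feedback(candidates):
    """Keep only the outcomes an organisation sees by default: those of people it hired."""
    return [c for c in candidates if c.hired]


# Hypothetical toy data: two hires, three rejections.
candidates = [
    Candidate(hired=True, qualified=True),
    Candidate(hired=True, qualified=False),
    Candidate(hired=False, qualified=True),
    Candidate(hired=False, qualified=True),
    Candidate(hired=False, qualified=False),
]

fp, fn = error_counts(candidates)                                 # full picture
fp_seen, fn_seen = error_counts(observable_feedback(candidates))  # what feedback reveals
```

With the full picture, the toy data contains one false positive and two false negatives. Restricted to hired candidates, the same count finds the false positive and zero false negatives, which is why a model retrained only on that feedback never learns about the candidates it wrongly turned away.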

"Don't tell me as a company you care about diversity but no one gets paid on it — because that's BS."

Schmarzo Proposes 'Economics of Ethics' Framework to Embed Moral Constraints Directly Into AI Models

Working on what he describes as potentially his defining book, Schmarzo is developing a five-stage AI and data literacy framework aimed not at specialists but at high school and middle school students — a deliberate effort to broaden who participates in AI governance. The book's animating challenge is codification: unless ethical principles can be expressed in terms precise enough to enter an AI utility function, they remain decorative rather than operative. His proposed solution, which he calls the 'economics of ethics,' applies economic measurement concepts to ethical constraints, an approach he is currently testing with a small number of clients.
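The video does not spell out how the 'economics of ethics' is computed, so the following is only one way the codification idea might look: a utility function in which ethical costs are priced and subtracted from value created. The function name, the cost categories, and the weights are all assumptions made for illustration.

```python
def utility(value_created, ethical_costs, weights):
    """Value created minus the weighted sum of ethical costs.

    Hypothetical form: each ethical cost is given an explicit weight so that it
    changes the number the model optimises instead of sitting in a mission statement.
    """
    penalty = sum(weights[name] * cost for name, cost in ethical_costs.items())
    return value_created - penalty


# Hypothetical figures, chosen only to show the arithmetic.
costs = {"false_negative_harm": 10.0, "disparate_impact": 5.0}
weights = {"false_negative_harm": 2.0, "disparate_impact": 4.0}

score = utility(100.0, costs, weights)  # 100 - (2*10 + 4*5) = 60.0
```

The point of the sketch is the one made above: a principle with no weight in the function has no effect on the outcome, however prominently it is stated elsewhere.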

The real question is not whether AI can be made ethical, but whether organisations will wait for regulators to mandate it or act before the architecture is already fixed. Schmarzo's argument is that proactive ethics — the Good Samaritan standard — must replace the passive 'do no harm' posture that currently dominates corporate AI discourse.

"Ethics is not a passive activity — it's a proactive activity."

Big Data's Power Lies in Granularity, Not Volume — and That Distinction Changes Everything

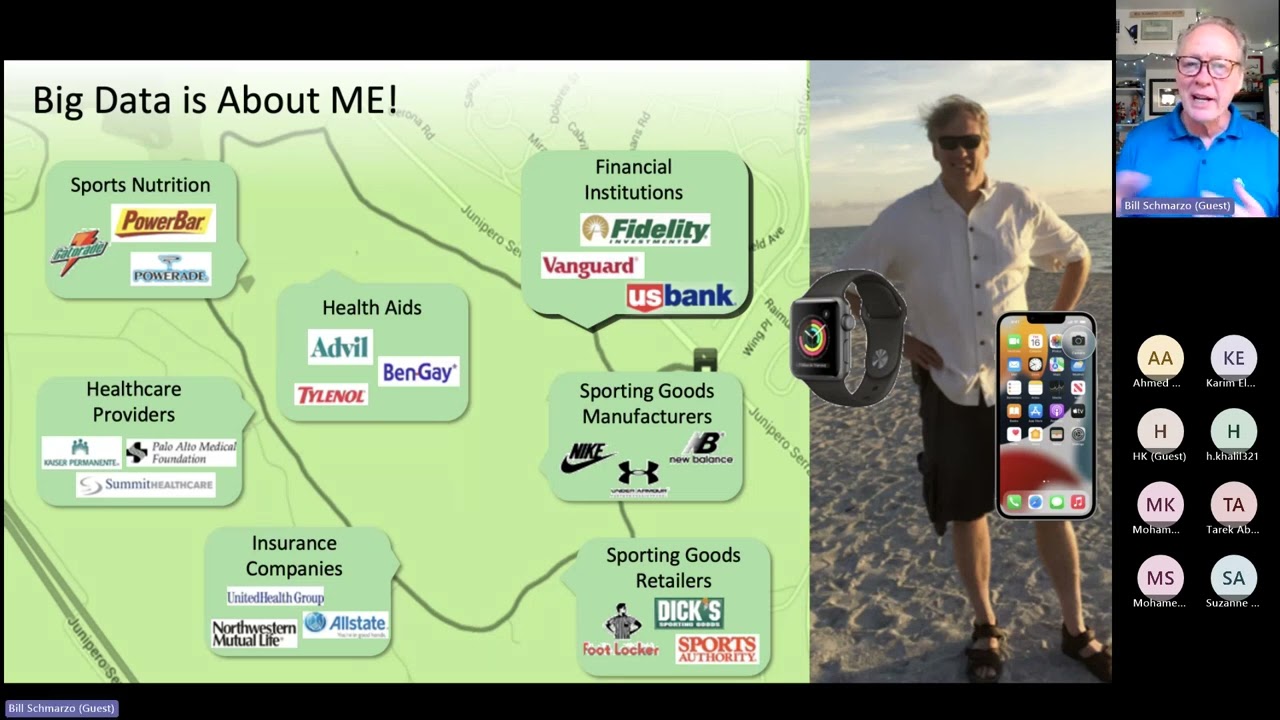

Schmarzo reframes Big Data not as a storage problem but as a resolution problem: where earlier analytics operated at aggregate levels — store sales by week, incidents by month — modern data infrastructure can descend to individual-level behavioural signatures, what he terms 'Nanoeconomics.' The concept, extending the macro-micro ladder to a third tier, holds that individual humans, patients, students, or industrial machines each generate usage patterns from which predicted behavioural and performance propensities can be derived and acted upon with precision.
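A small sketch shows the difference between the two resolutions. The transaction records and both functions are hypothetical, and the 'propensity' here is a deliberately crude stand-in (the share of weeks in which a customer bought anything), not a measure taken from the video.

```python
from collections import defaultdict

# Hypothetical transactions: (customer_id, week, amount)
transactions = [
    ("a", 1, 20.0), ("a", 2, 20.0), ("a", 3, 20.0),
    ("b", 1, 60.0),
    ("c", 3, 40.0),
]


def sales_by_week(rows):
    """Aggregate view: total sales per week, with individuals averaged away."""
    totals = defaultdict(float)
    for _, week, amount in rows:
        totals[week] += amount
    return dict(totals)


def purchase_propensity(rows, n_weeks):
    """Granular view: share of weeks in which each customer made a purchase."""
    weeks_active = defaultdict(set)
    for customer, week, _ in rows:
        weeks_active[customer].add(week)
    return {customer: len(weeks) / n_weeks for customer, weeks in weeks_active.items()}


weekly = sales_by_week(transactions)
propensity = purchase_propensity(transactions, n_weeks=3)
```

The weekly totals (80.0, 20.0, 60.0) say nothing about who is buying. The per-customer view separates a steady weekly buyer (`a`, propensity 1.0) from two occasional ones, using exactly the same rows.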

The structural implication is significant: if granularity rather than scale is the operative variable, then the competitive and ethical stakes of data collection are far higher than conventional 'Big Data' discourse acknowledges. Individualised medical curricula, personalised education pathways, and precision resource allocation in housing all become technically tractable — as does, Schmarzo concedes, surveillance at a scale that makes aggregate data look benign by comparison.

"Big Data is not about volume — it's about granularity."

Summarised from Fox Agency · 31:22. All credit belongs to the original creators. Streamed.News summarises publicly available video content.