Original source: Bill Schmarzo

This video from Bill Schmarzo covered a lot of ground. One segment stood out as worth your time. Everything below links directly to the timestamp in the original video.

Algorithmic decision-making is already reshaping life chances in employment, lending, and criminal justice — mostly without the knowledge of those affected. The question is whether oversight can catch up before the damage compounds.

AI's Benefits Flow Unevenly as Opaque Models Already Shape Hiring, Lending, and Justice

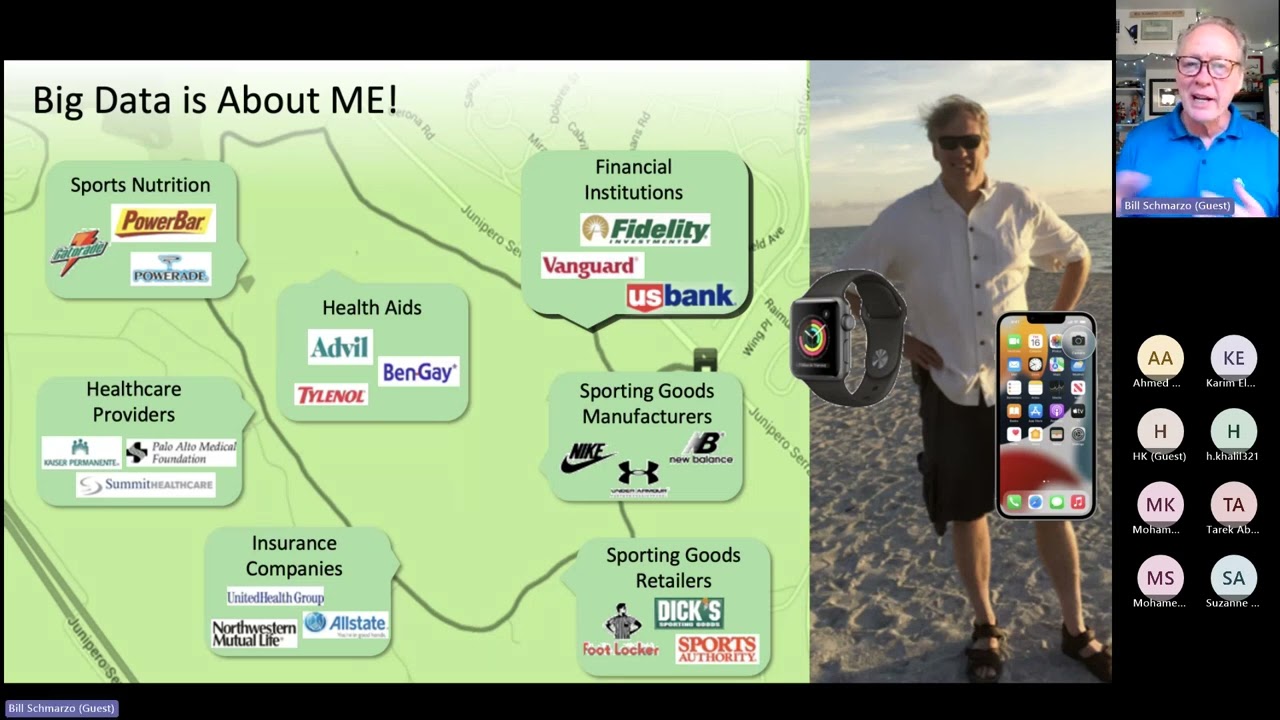

The segment exposes not a future risk but a present condition: algorithmic systems built on incomplete, inaccurate, and structurally biased data are already determining employment, housing, and criminal justice outcomes for millions of people who neither know they are subject to these models nor have any meaningful recourse against them. Cathy O'Neil's phrase 'weapons of math destruction' captures the asymmetry precisely: the opacity that insulates these systems from scrutiny is the same opacity that concentrates their harm among the least powerful.

The structural issue here is that AI literacy has been treated as a specialist concern when it is, in fact, a civic one — and the longer that framing persists, the wider the gap between those who deploy these systems and those who absorb their consequences.

"There are some people who are going to win, but there are going to be some people who lose — and in order to make certain that we don't create yet more divide in our countries and our society, we need to find a way to make sure that everybody is brought into this process."

Summarised from Bill Schmarzo · 1:07:12. All credit belongs to the original creators. Streamed.News summarises publicly available video content.